Human-Centric Cybersecurity: Why Secure Behaviour Is the New Security Perimeter

Feb 24


Human behaviour, rather than technical weaknesses, increasingly drives cybersecurity failures. Research consistently shows that human actions, including social engineering responses, errors, and misuse, are central to successful attacks. Verizon’s 2025 DBIR indicates that around 60% of breaches involve a human element, highlighting the dominant role of user behaviour in security outcomes.

Academic research similarly concludes that technological safeguards alone cannot address vulnerabilities created by human decision-making and organisational context. Effective protection requires a human-centric approach that integrates psychological, social, and technical factors, recognising that security outcomes depend on how people interact with systems in real conditions, not just on the strength of the systems themselves.

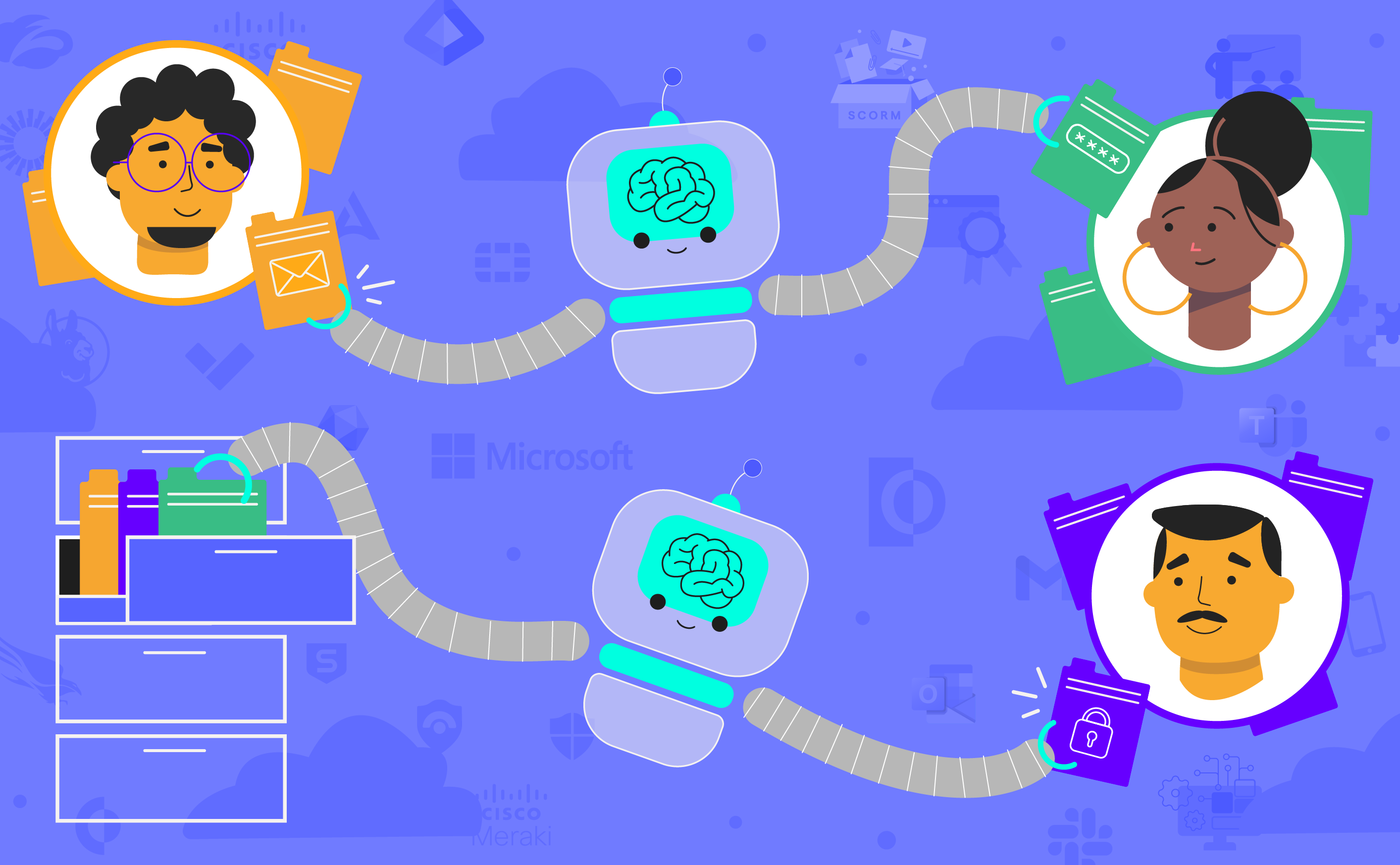

As organisations recognise this shift, structured initiatives such as Security Behaviour & Culture Programs (SBCP), identified by Gartner as a key cybersecurity trend, are emerging to influence employee behaviour systematically. Gartner recommends frameworks such as PIPE (Practices, Influencers, Platforms, Enablers) to guide implementation, while Security Behaviour Management (SBM) platforms enable continuous measurement and intervention. This positions solutions such as OutThink as foundational components of modern cyber resilience strategies.

The Shift to Human-Centric Security

For a long time, cybersecurity was treated as a technology arms race with better firewalls, smarter antivirus, and more monitoring. If something went wrong, the assumption was that the tools weren’t strong enough yet. But over the past few years, it has become clear that even the best technology can’t compensate for what people actually do day to day. A single rushed decision, a moment of distraction, or a perfectly timed message can undo layers of technical protection. In other words, real security depends heavily on everyday security behaviour across the organisation, and less on the strength of the technical layers of defence.

Human-centric security starts from this reality. Instead of assuming employees will follow policies perfectly, it asks a simpler question: how do people actually behave when they’re busy, under pressure, or just trying to get their job done?

Research shows that decisions are shaped by routine, workplace culture, and social context as much as by rules on paper. If secure behaviour feels impractical or slows down work, people will find ways around it, not because they want to break rules but because they’re trying to be productive. That’s why effective cyber resilience has to work with human behaviour and individual context, not ignore it.

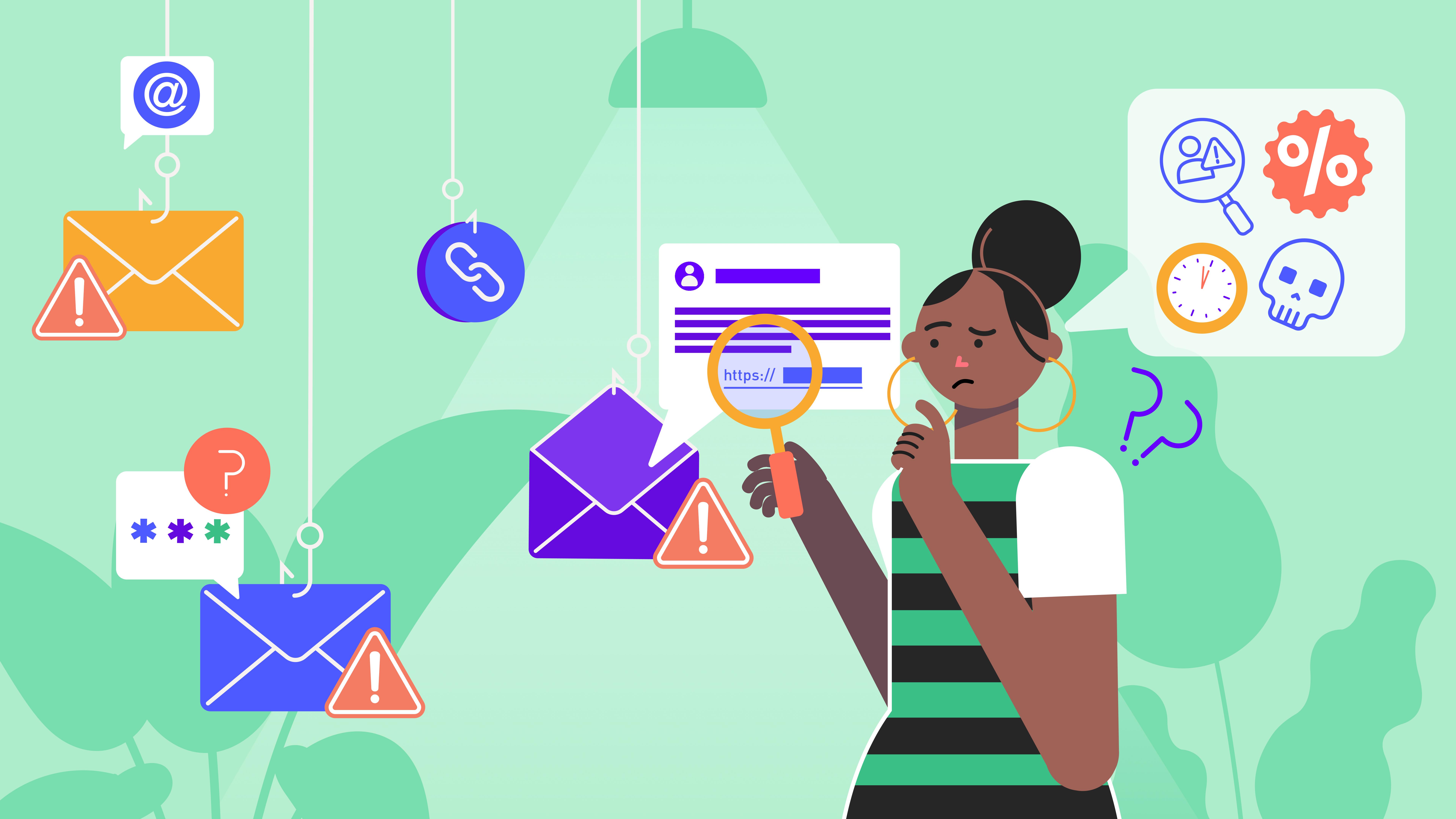

Attackers exploit the gap between how security policies assume people will behave and how they actually behave under real-world conditions. Social engineering campaigns rely on trust, urgency, and familiarity to prompt actions that feel legitimate in the moment. Rather than attacking systems directly, they target the people who operate them, knowing that behaviour is often the easiest path into an organisation.

As a result, organisations are shifting toward long-term efforts to strengthen security behaviour, not just awareness. Security Behaviour and Culture Programs (SBCP) aim to make safe actions routine, embedding secure behaviour into everyday work rather than treating it as occasional training.

Understanding that behaviour matters is just the first step. To change outcomes, organisations need to understand how everyday human factors actually contribute to failures in the first place.

Human Factors in Cybersecurity Failures

Many cybersecurity incidents don’t start with sophisticated malware or advanced exploits; they start with ordinary human actions. Mistakes such as weak passwords, misconfigurations, accidental data sharing, or falling for social engineering can undermine even well-designed systems.

In this sense, human behaviour can either reinforce security controls or quietly weaken them from the inside.

Pressure makes this worse. When people are tired, overloaded, or working against deadlines, they tend to rely on quick, instinctive decisions rather than careful checks. Behavioural research, popularised by Daniel Kahneman’s book Thinking, Fast and Slow, describes two modes of thinking: a fast, automatic system for routine decisions and a slower, analytical system for deliberate reasoning. Under stress or time pressure, people tend to default to the fast mode, which relies on intuition and shortcuts rather than detailed evaluation.

In everyday work environments, this means risky actions often happen not because employees intend to ignore security, but because they are operating on autopilot. Approving a request, clicking a link, or bypassing a step may feel reasonable in the moment. As a result, many security failures are a by-product of normal human decision-making under pressure rather than deliberate negligence.

Confidence can also be misleading. Many users believe they can reliably spot suspicious messages or activity, but that vigilance is hard to sustain: constant exposure to warnings leads to alert fatigue. Over time, notifications blend into the background, and important signals may be ignored simply because there are too many of them. This desensitisation reduces the effectiveness of security tools that depend on user attention.

Finally, people naturally prioritise convenience. If security processes are slow, confusing, or disruptive, employees may develop workarounds to keep tasks moving. These unofficial shortcuts, such as sharing credentials or bypassing procedures, can introduce new vulnerabilities. Research highlights that usability and organisational culture strongly influence whether secure behaviour is followed in practice.

If human factors create vulnerabilities, security must be designed to account for them rather than assuming they can be eliminated through policy alone.

Designing Security That Works for Humans, Not Against Them

Security measures are most effective when they fit naturally into how people work. Research on organisational resilience shows that policies that are clear, practical, and aligned with daily tasks are far more likely to be followed. When rules feel complicated or disruptive, employees may ignore them or develop workarounds, unintentionally weakening protection. Practicality, therefore, plays a critical role in whether secure behaviour is sustained in practice.

Human factors research also shows that individual characteristics, such as attitudes, impulses, and habits, influence how people engage with security practices. Employees do not interact with systems as neutral users; their behaviour is shaped by personality, awareness, and context. Understanding these differences is important because risky actions often arise from normal behaviour patterns rather than deliberate misconduct.

Cognitive demands further affect performance. Studies on workload and human performance indicate that complex or mentally taxing tasks increase the likelihood of errors. When security processes require sustained attention or complicated steps, mistakes become more likely, especially under pressure. Simplifying tasks and providing appropriate support can help users maintain safer behaviour.

Ultimately, security works best when it requires minimal extra effort. When protective actions are integrated into normal workflows, employees are less tempted to bypass controls. Aligning security with real working conditions makes secure behaviour easier to maintain consistently over time.

Yet even the most human-centred design will fail if the surrounding culture does not support it. Sustained secure behaviour emerges not just from systems or policies, but from the signals employees receive every day about what truly matters.

Building a Human-First Security Culture (SBCP in Practice)

Creating a strong security culture is less about written policies and more about what people see leaders actually prioritise. Research shows that employees tend to mirror management behaviour; when senior leaders visibly follow secure practices and treat security as part of business operations, staff are far more likely to do the same. In this sense, culture is set from the top, and leadership actions signal whether security behaviour is truly valued or merely treated as a box-ticking exercise.

Influence also spreads through peers. Trusted colleagues within teams often shape day-to-day behaviour more effectively than central directives. Security champions can translate policies into practical guidance, reinforce expectations, and encourage secure behaviour in context. This peer-driven model helps embed security norms across the organisation rather than confining responsibility to the security team alone.

Sustainable change requires continuous engagement, not one-off training. Short, targeted interventions repeated over time are more effective than occasional sessions at maintaining awareness and reinforcing secure behaviour. Modern Security Behaviour and Culture Programs increasingly rely on the behavioural insights and adaptive learning capabilities provided by Security Behaviour Management (SBM) platforms, such as OutThink, to deliver relevant guidance when risk signals emerge rather than after incidents occur.

Equally important is the tone of the programme. Cultures built on blame can discourage reporting, leaving risks hidden until they escalate. Positive reinforcement, feedback, and support encourage openness and cooperation, enabling organisations to learn from mistakes and strengthen resilience. Behaviour-driven approaches aim to make secure behaviour visible, measurable, and continuously improved rather than enforced through punishment.

Learning from mistakes after the fact is not enough, however. Organisations also need ways to detect and address risky behaviour before it escalates into a breach.

Human Risk & Behaviour-Driven Incidents

Many large-scale cyber incidents follow predictable human-centric patterns: social engineering, credential theft, insider access misuse, and routine errors under pressure.

A prominent example is the September 2023 ransomware attack on MGM Resorts by the Scattered Spider group. Attackers used voice phishing (“vishing”) to convince an IT help-desk employee to reset credentials, gaining privileged access without exploiting a technical vulnerability. The breach crippled hotel operations for about 10 days, leaving room keys, reservation systems, and casino machines inoperable, showing how a single manipulated interaction can cascade into enterprise-wide disruption. MGM later estimated roughly a $100 million impact on quarterly results, alongside remediation costs and the ensuing legal fallout.

A related incident struck Caesars Entertainment in the same month. Attackers again relied on social engineering to obtain employee credentials and one-time authentication codes, bypassing multi-factor protections. Caesars reportedly paid about $15 million to prevent the release of stolen customer data. These cases highlight a recurring pattern: attackers increasingly target people rather than systems because human behaviour is often the weakest link in otherwise secure environments.

Because such incidents exploit predictable responses, strengthening security behaviour can materially reduce exposure. Research emphasises that cybersecurity failures frequently stem from human decision-making and organisational context, meaning technical controls alone cannot address all risks. Integrating human-centric measures with technology improves resilience by closing behavioural gaps that attackers repeatedly exploit.

Behaviour-focused interventions also lower error rates and improve compliance. Studies show that improving cybersecurity behaviour and “security hygiene” reduces the likelihood of mistakes that enable breaches, helping organisations prevent incidents before they escalate. Addressing how people interact with systems and not just the systems themselves is therefore essential for reducing cyber risk at scale.

Reducing human risk at scale starts with measuring it. Metrics alone do not change outcomes, but they show where to act and whether interventions are working.

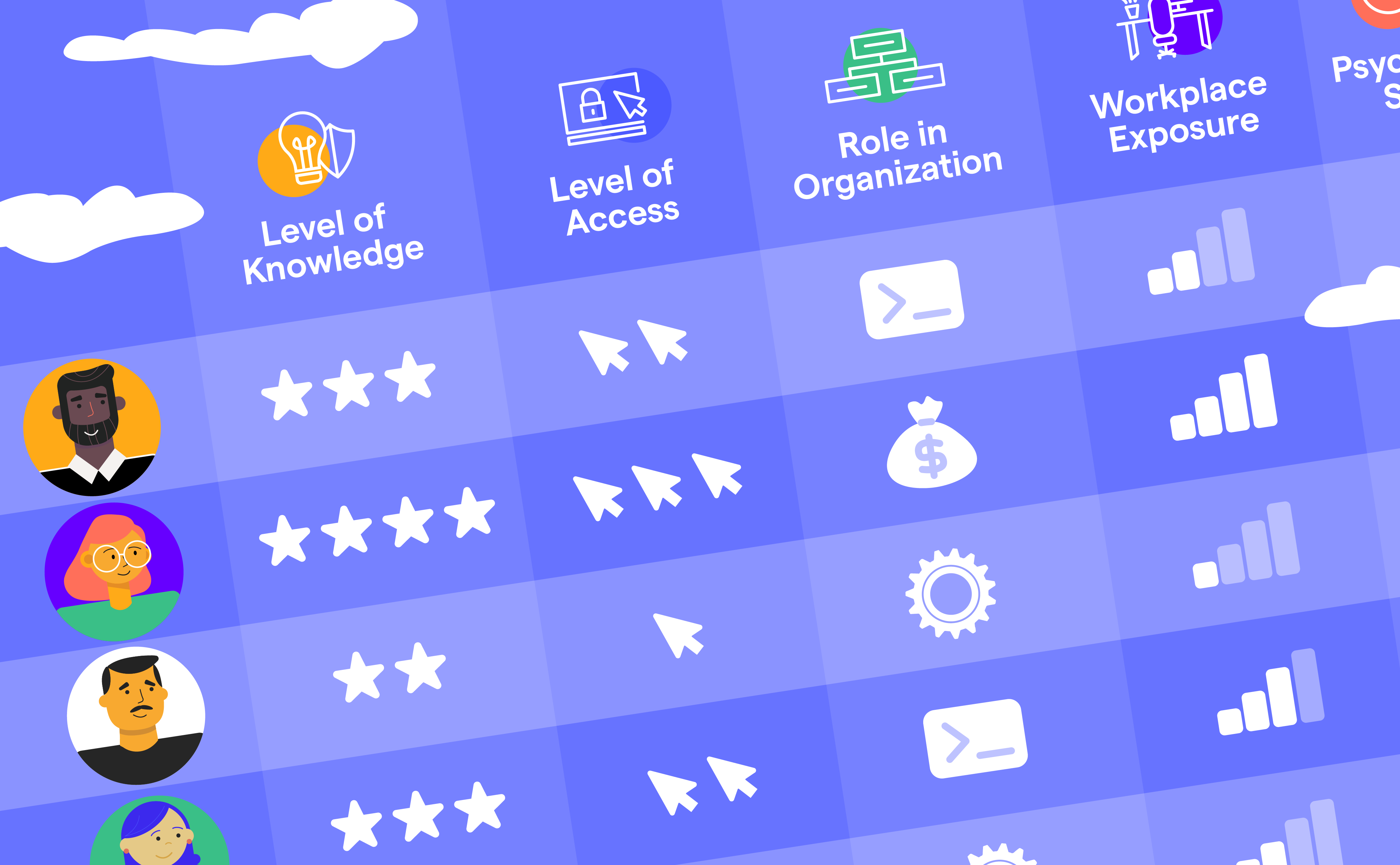

What Organisations Can Actually Track Today

While human behaviour is difficult to predict in theory, organisations can measure several practical indicators that reflect real security risk. Instead of relying on personality traits or abstract models, mature programmes focus on observable actions, such as how employees respond when confronted with potential threats. Behaviour-based metrics provide more reliable insight because they capture what people actually do, not what they say they would do.

One of the clearest signals is reporting behaviour. How often employees report suspicious emails or messages, and how quickly they do so, indicate awareness and engagement. Faster reporting can significantly reduce attacker dwell time by allowing security teams to respond before an incident spreads.
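To make these two signals concrete, here is a minimal Python sketch that computes a report rate and a median time-to-report from delivery and report timestamps. The `ReportEvent` record and the `reporting_metrics` function are hypothetical and not taken from any particular platform; in practice the timestamps would come from whatever tool logs message delivery and user reports.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from statistics import median
from typing import Optional


@dataclass
class ReportEvent:
    """One suspicious message delivered to one employee (hypothetical schema)."""
    employee_id: str
    delivered_at: datetime
    reported_at: Optional[datetime]  # None if the employee never reported it


def reporting_metrics(events: list[ReportEvent]) -> dict[str, float]:
    """Return the share of messages reported and the median minutes to report."""
    if not events:
        return {"report_rate": 0.0, "median_minutes_to_report": float("nan")}
    # Delay in minutes for every message that was actually reported.
    delays = [
        (e.reported_at - e.delivered_at).total_seconds() / 60
        for e in events
        if e.reported_at is not None
    ]
    return {
        "report_rate": len(delays) / len(events),
        # NaN signals "nobody reported", which is different from a fast report.
        "median_minutes_to_report": median(delays) if delays else float("nan"),
    }


if __name__ == "__main__":
    t0 = datetime(2025, 3, 1, 9, 0)
    events = [
        ReportEvent("a", t0, t0 + timedelta(minutes=4)),
        ReportEvent("b", t0, t0 + timedelta(minutes=30)),
        ReportEvent("c", t0, None),
        ReportEvent("d", t0, t0 + timedelta(minutes=10)),
    ]
    print(reporting_metrics(events))
    # {'report_rate': 0.75, 'median_minutes_to_report': 10.0}
```

The median is used rather than the mean because a handful of very late reports would otherwise dominate the figure.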

Response patterns during simulated attacks also reveal risk levels. Phishing simulations, for example, show whether users click malicious links, ignore suspicious content, or report it. Tracking trends over time helps organisations determine whether training and communication efforts are actually improving behaviour rather than simply increasing knowledge.
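Trend tracking can be sketched in a similar way. The example below, again a hypothetical illustration rather than any vendor's method, assumes each simulation records one outcome label per recipient (`clicked`, `reported`, or `ignored`). It converts those labels into rates and fits a simple least-squares slope across successive campaigns.

```python
from collections import Counter

# Hypothetical outcome labels recorded for each recipient of a simulated phish.
OUTCOMES = ("clicked", "reported", "ignored")


def campaign_rates(outcomes: list[str]) -> dict[str, float]:
    """Share of recipients in each outcome category for one simulation."""
    counts = Counter(outcomes)
    total = len(outcomes)
    return {o: (counts[o] / total if total else 0.0) for o in OUTCOMES}


def trend_slope(values: list[float]) -> float:
    """Least-squares slope of a metric against campaign index 0, 1, ..., n-1.

    A negative value means the metric is falling from one campaign to the next.
    """
    n = len(values)
    if n < 2:
        return 0.0
    mean_x = (n - 1) / 2
    mean_y = sum(values) / n
    num = sum((i - mean_x) * (y - mean_y) for i, y in enumerate(values))
    den = sum((i - mean_x) ** 2 for i in range(n))
    return num / den


if __name__ == "__main__":
    # Three successive simulations, ten recipients each (illustrative data only).
    campaigns = [
        ["clicked"] * 4 + ["reported"] * 2 + ["ignored"] * 4,
        ["clicked"] * 3 + ["reported"] * 4 + ["ignored"] * 3,
        ["clicked"] * 1 + ["reported"] * 6 + ["ignored"] * 3,
    ]
    rates = [campaign_rates(c) for c in campaigns]
    click_trend = trend_slope([r["clicked"] for r in rates])
    report_trend = trend_slope([r["reported"] for r in rates])
    print(f"click trend {click_trend:+.2f}, report trend {report_trend:+.2f}")
    # click trend -0.15, report trend +0.20
```

A falling click rate alongside a rising report rate suggests behaviour is improving. One caveat: simulations vary in difficulty, so trends are only meaningful when campaigns are reasonably comparable.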

Broader engagement indicators provide additional context. Participation in security initiatives, willingness to follow procedures, and openness in reporting mistakes all reflect the strength of the organisation’s security culture. These measurable signals allow programmes to adapt continuously, shifting from one-time assessments toward ongoing management of human risk.

Together, these practical indicators offer a realistic path toward understanding and reducing human-driven cyber risk.

Turning Human-Led Security Into Action

Improving security outcomes requires more than awareness campaigns. Gartner describes SBCP as structured initiatives designed to influence employee behaviour continuously, rather than relying on periodic training alone. These programmes integrate communication, leadership engagement, and organisational practices to make secure behaviour part of everyday work. Culture, meaning how people actually act both under pressure and in routine conditions, becomes a critical layer of defence.

To operationalise SBCP, implementation approaches such as the PIPE framework emphasise alignment across multiple dimensions: practical policies, influential leaders and peer networks, enabling platforms, and organisational support mechanisms. This combination helps translate strategy into daily routines, ensuring behaviour change persists rather than fading after initial efforts. Without reinforcement across these areas, improvements are often short-lived.

Technology plays a supporting role by making human risk visible and manageable. Security Behaviour Management (SBM) platforms can monitor behavioural trends, provide targeted feedback, and track progress over time.

Solutions such as OutThink are designed to support these programmes by helping organisations measure secure behaviour and intervene early when risk increases, moving from reactive response to proactive risk management.
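As an illustration of early intervention, the rule below flags employees for a short, targeted nudge when recent simulation results suggest rising risk. It is a hypothetical Python sketch, not a description of how OutThink or any other SBM platform works; the `EmployeeSignals` fields, the click threshold, and the cooldown period are all assumptions chosen for the example.

```python
from dataclasses import dataclass


@dataclass
class EmployeeSignals:
    """Recent behavioural signals for one employee (hypothetical fields)."""
    employee_id: str
    recent_sim_clicks: int      # simulated phishes clicked in the review window
    recent_sim_reports: int     # simulated phishes reported in the same window
    days_since_last_nudge: int  # days since this person last received an intervention


def needs_nudge(s: EmployeeSignals, click_threshold: int = 2, cooldown_days: int = 14) -> bool:
    """Illustrative rule for flagging someone for a short, targeted intervention.

    Repeated clicks that are not offset by reports count as a rising risk
    signal; a cooldown stops the same person being nudged too often, since
    frequent prompts can themselves contribute to alert fatigue.
    """
    risky = (
        s.recent_sim_clicks >= click_threshold
        and s.recent_sim_reports < s.recent_sim_clicks
    )
    return risky and s.days_since_last_nudge >= cooldown_days


if __name__ == "__main__":
    people = [
        EmployeeSignals("a", recent_sim_clicks=3, recent_sim_reports=0, days_since_last_nudge=30),
        EmployeeSignals("b", recent_sim_clicks=3, recent_sim_reports=0, days_since_last_nudge=5),
        EmployeeSignals("c", recent_sim_clicks=1, recent_sim_reports=0, days_since_last_nudge=30),
        EmployeeSignals("d", recent_sim_clicks=2, recent_sim_reports=3, days_since_last_nudge=30),
    ]
    print([p.employee_id for p in people if needs_nudge(p)])
    # ['a']
```

The cooldown reflects the alert-fatigue problem: nudging the same person too often risks turning guidance into background noise.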

In modern cyber attacks, the shortest path to the network is usually through a human.

Understanding why awareness alone doesn't prevent this is the starting point. Our piece on why training fails, and what actually reduces human cyber risk, makes the case.

Sources

- https://www.verizon.com/business/resources/reports/dbir/

- https://link.springer.com/article/10.1007/s10207-025-01032-0

- https://www.recyber.com/human-behaviour-cybersecurity-risk/

- https://hoxhunt.com/blog/gartner-names-hoxhunt-a-security-behavior-and-culture-change-program-representative-provider

- https://pmc.ncbi.nlm.nih.gov/articles/PMC11861440/

- https://www.scirp.org/journal/paperinformation?paperid=143067

- https://pmc.ncbi.nlm.nih.gov/articles/PMC5501883/

- https://pmc.ncbi.nlm.nih.gov/articles/PMC8195225/

- https://www.researchgate.net/publication/359924870_Understanding_of_Human_Factors_in_Cybersecurity_A_Systematic_Literature_Review

- https://specopssoft.com/blog/mgm-resorts-service-desk-hack/

- https://en.wikipedia.org/wiki/Scattered_Spider


- https://www.sciencedirect.com/science/article/pii/S0167404824003304

- https://kymatio.com/blog/human-risk-cybersecurity-kpis-dashboard

- https://right-hand.ai/blog/measuring-human-risk-in-employee-phishing-resilience

- https://www.livingsecurity.com/blog/cybersecurity-metrics-that-matter-measuring-behavior-engagement-motivation

- https://en.wikipedia.org/wiki/Thinking,_Fast_and_Slow