How to Spot AI‑Generated Videos: Why Detection Now Depends on Human Judgement, Not Visual Clues

Feb 25

The challenge in 2026 is no longer identifying poor-quality deepfakes.

It is understanding why highly realistic AI-generated videos are trusted even when nothing explicitly looks wrong.

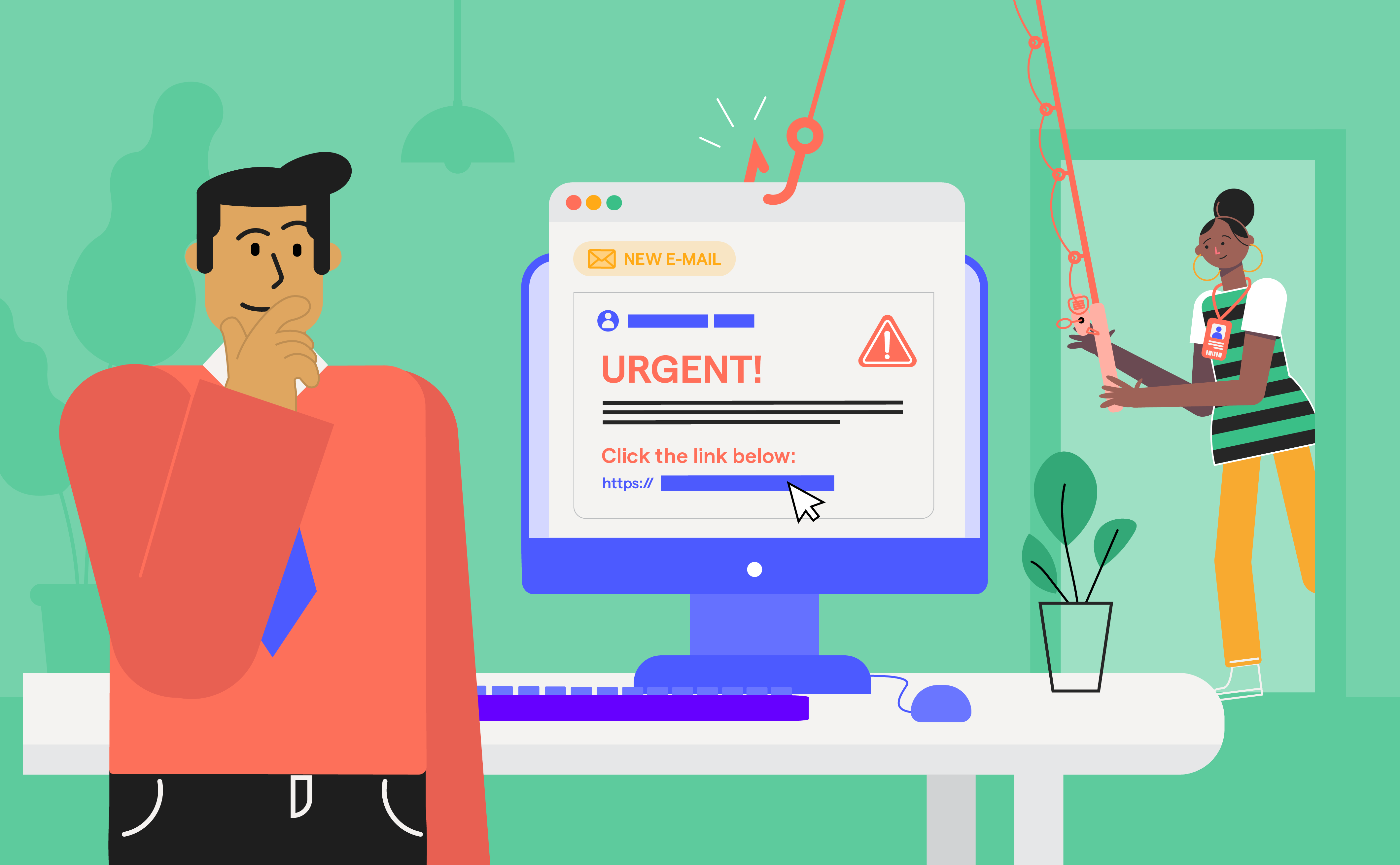

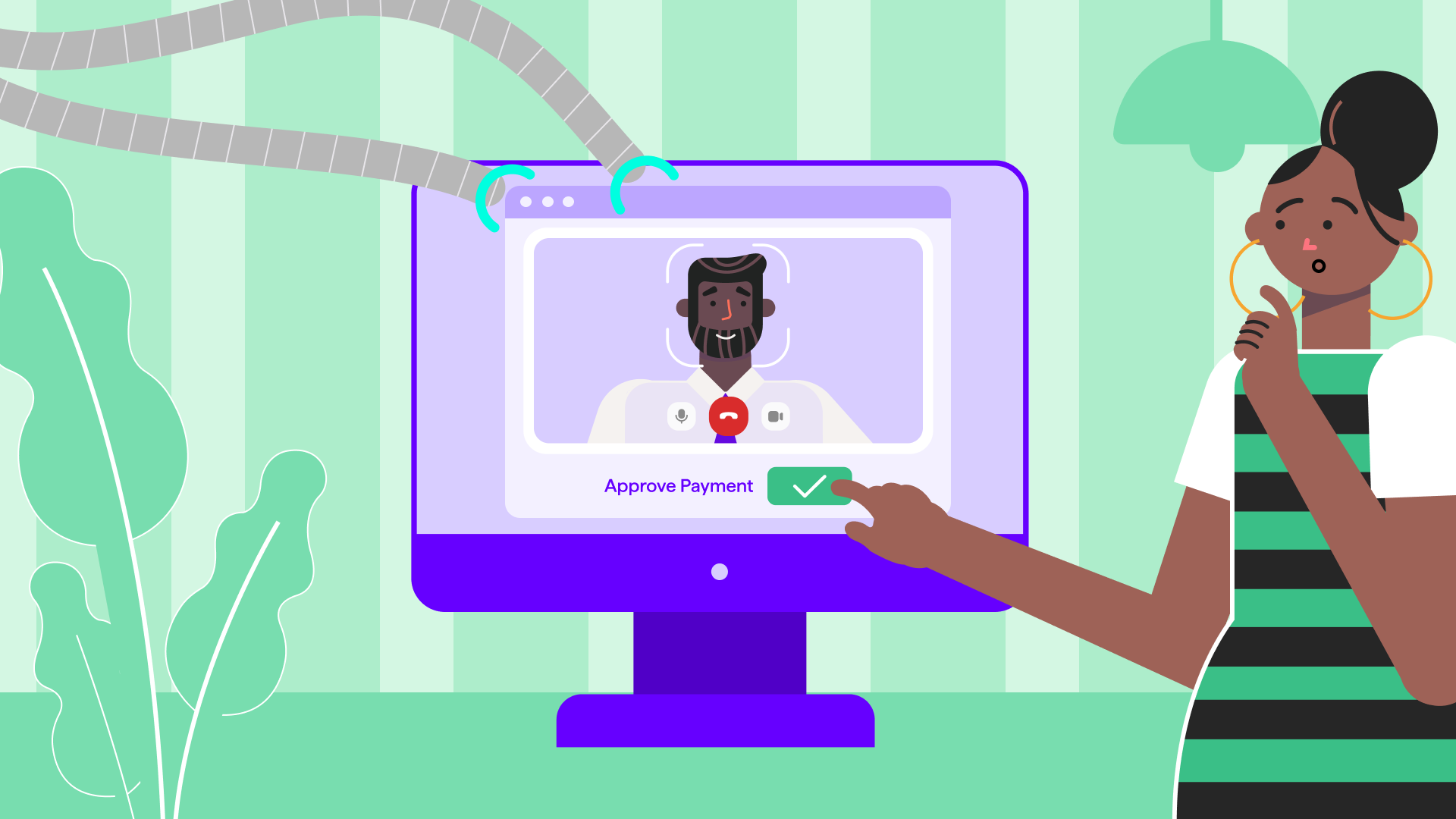

Synthetic video risk now emerges in routine organisational moments: a familiar executive appearing on a video call, a trusted vendor sending a short confirmation clip, or a “quick approval” requested under urgency. As generative video tools compress production time and increase contextual realism, detection shifts away from visual artefacts toward human judgement under pressure.

This analysis reframes AI video detection as a behavioural and organisational risk problem, not a visual literacy issue. It examines how modern AI videos are created, why traditional spotting advice fails, and what enterprises must measure, train, and design for as synthetic video becomes operationally usable by attackers.

How AI-generated videos became believable at work

Not long ago, AI-generated video felt like a tech curiosity, something you’d see in a flashy demo but luckily never in your inbox. That is no longer true. Today, synthetic videos aren’t just better; they’re believable, and that makes all the difference in workplaces where trust and speed drive decisions.

Part of the reason is the sheer volume and sophistication of these fake clips. Deepfakes now show up in around 6.5% of fraud cases globally, or about 1 in 15 attacks, and this figure has jumped by 2,137% since 2022. That’s not a slow uptick; it’s a wave.
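As a quick sanity check on those two figures, the arithmetic can be worked through in a few lines of Python. This is only an illustrative sketch: the function names are made up for this example, and the inputs are the percentages quoted above.

```python
def one_in_n(share_percent: float) -> float:
    """Convert a percentage share into a '1 in N' ratio."""
    return 100.0 / share_percent


def growth_multiplier(increase_percent: float) -> float:
    """Convert a percentage increase into a multiplier on the starting value."""
    return 1.0 + increase_percent / 100.0


print(round(one_in_n(6.5), 1))            # 15.4, i.e. roughly 1 in 15
print(round(growth_multiplier(2137), 2))  # 22.37, i.e. more than 22 times the starting level
```

The second line is the one that is easy to misread: a 2,137% increase is not "21 times" the original level but a little over 22 times, because the starting value itself is included in the multiplier.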

What’s even more concerning is how poorly humans perform when asked to spot them. In controlled tests, people correctly identified high-quality deepfake videos only 24.5% of the time, which means viewers were fooled more often than not. Even in image-based tests, where performance improves, the failure rate still sits at 38%, which in a corporate environment means more than one in three decisions about authenticity could be wrong. In audio experiments, participants believed they were around 73% accurate, yet were repeatedly misled by subtle machine-generated cues. The bigger risk isn’t just misjudgment; it’s overconfidence.

These numbers align with broader global findings. The 2026 International AI Safety Report, cited by The Guardian, notes that AI-generated content has become harder to distinguish from real media than it was just a year earlier. In early 2025, 46% of deepfake incidents used video as the primary medium, and Cyble reports that over 30% of high-impact corporate impersonation attacks involved AI-powered deepfakes.

In real organisations, these two forces collide: deepfake videos are becoming common enough to be weaponised, and we’re surprisingly bad at detecting them. That’s why these videos “work” - not because they look perfect, but because they feel right to human viewers when they arrive in the middle of real workflows.

How modern AI videos are actually built

To understand why AI-generated videos are now so convincing, it helps to break down how today’s synthetic content is created. Modern deepfake systems don’t just copy a face; they build an entire believable scenario that feels “normal” to human viewers.

Identity Seeding

Attackers targeting enterprises start with readily available public material: LinkedIn headshots, conference recordings, podcasts, and earnings call videos. Modern AI models ingest this material to map facial structure, skin tone, voice quality, and subtle visual quirks from multiple angles. No insider access is needed. The more high-quality material available online, the sharper and more believable the synthetic identity becomes. This seeding phase is the foundation; without it, the illusion collapses.

Behavioural Cloning

Once the identity looks right, AI shifts focus to behaviour. Models learn how a person speaks, pauses, emphasises points, and projects confidence by analysing real recordings. These patterns are then reproduced in synthetic video, making the speaker feel familiar rather than artificial. This is why deepfake videos often “sound exactly like” the real person, and why people trust the delivery before questioning the request.

Contextual Stitching

The clone is then placed into a believable organisational moment. AI layers in office-style backgrounds, internal jargon, realistic lighting, and urgency cues such as "urgent wire transfer" that match everyday work scenarios, and tools blend the edges seamlessly. The result doesn’t feel staged - it feels routine. When content fits naturally into existing workflows, scepticism drops and people stop asking why it exists at all. Context does most of the convincing before logic has a chance to intervene.

Multimodal Layering

Finally, everything is synchronised. Facial movement, voice delivery, timing, and narrative flow are generated together, so nothing feels out of sync. Audio reinforces visuals, expressions match tone, and pacing feels human. Research shows that when these signals align, humans struggle to spot manipulation. The interaction feels coherent, credible, and real, even when it isn’t.

Why people fail to spot AI videos even when nothing looks wrong

The uncomfortable truth about AI-generated video isn’t that it’s invisible. It’s that most of the time, we’re not actually looking for it.

Humans don’t inspect content by default; we respond to it. Research published in Communications of the ACM (CACM) shows that when people are asked to distinguish real media from AI-generated media, accuracy sits at or near chance, around 51%. In other words, our gut instinct is barely better than flipping a coin. Even more telling, participants who said they were familiar with deepfakes performed no better than those who weren’t. Familiarity breeds confidence, not accuracy.

This is where familiar identities short-circuit doubt. When a face looks like a colleague, a manager, or a known executive, our brains switch from verification mode to trust mode. Studies consistently show that people overestimate their ability to detect manipulation and rely heavily on recognition and social cues instead of deliberate checks. If the person “looks right” and “sounds right,” critical thinking often gets switched off.

Add authority and urgency, and verification collapses altogether. Requests framed as time-sensitive or coming from leadership trigger automatic compliance behaviours, especially in professional environments where responsiveness is rewarded.

Finally, multimodal realism breaks checklist thinking. Research on audiovisual deepfakes shows humans perform significantly worse when video and audio are presented together. Our brains process these cues as a single coherent experience, not as separate elements to be analysed. Simple red flags such as pixel glitches, odd lighting, and unnatural motion fail when everything aligns just well enough.

The result? AI videos don’t need to deceive the eye. They just need to fit the moment. And that’s exactly why they work.

How to spot AI-generated videos (it's not pixel peeping anymore)

If you’re still trying to spot AI videos by squinting at faces or hunting for visual glitches, you’re already playing the wrong game. Modern deepfakes don’t fail because they look fake; they succeed because they make sense in the moment. Spotting them today is less about seeing better and more about thinking differently.

- Question why the video exists, not how realistic it looks

Ask why a message needed to arrive as a video in the first place. In real organisations, urgent or sensitive requests usually follow established channels. When the format feels unnecessary, the medium itself becomes the first warning sign.

- Look for mismatches between emotional tone and organisational stakes

Pay attention when calm, confident delivery clashes with high-risk or time-critical requests. Real pressure often shows friction. Perfect composure during urgent scenarios can be a sign of performance rather than reality.

- Notice when interaction is replaced by performance

Be cautious when a video delivers instructions without space for questions, discussion, or verification. One-way communication that feels staged rather than conversational should raise suspicion.

- Be alert to requests that arrive fully formed and discourage verification

Requests that bypass normal checks, reference urgency, or suggest “handling it later” are designed to shut down critical thinking and speed up compliance.

- Treat unsolicited video as a risk signal

Unexpected video messages, especially those asking for action, should now be treated as potential threats and not trusted by default, regardless of how familiar the face appears.

- Pause and think before you act

The most effective defence isn’t sharper vision, but better decision-making. Pausing to question context and intent matters more than spotting technical imperfections.
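The checklist above can be sketched as a small set of contextual checks. This is a minimal illustration, not a detection method: `VideoRequest`, its fields, and the "any signal on an action request means pause" rule are all hypothetical names and assumptions introduced here, and a real organisation would define its own signals and thresholds.

```python
from dataclasses import dataclass
from typing import List


@dataclass
class VideoRequest:
    """Hypothetical record of what a recipient observes about a video message."""
    unsolicited: bool               # nobody expected or asked for this video
    asks_for_action: bool           # payment, approval, credentials, etc.
    video_is_usual_channel: bool    # would this request normally arrive as video?
    tone_matches_stakes: bool       # delivery fits the urgency being claimed
    allows_questions: bool          # two-way interaction is possible
    discourages_verification: bool  # "no time to check", "handle it later"


def risk_signals(req: VideoRequest) -> List[str]:
    """Return the checklist items this request trips (illustrative rules only)."""
    signals = []
    if not req.video_is_usual_channel:
        signals.append("video format is unnecessary for this request")
    if not req.tone_matches_stakes:
        signals.append("emotional tone does not match the stakes")
    if not req.allows_questions:
        signals.append("performance instead of interaction")
    if req.discourages_verification:
        signals.append("request discourages verification")
    if req.unsolicited and req.asks_for_action:
        signals.append("unsolicited video asking for action")
    return signals


def should_pause(req: VideoRequest) -> bool:
    """Assumed policy: any tripped signal on an action request means pause and verify."""
    return req.asks_for_action and len(risk_signals(req)) > 0


clip = VideoRequest(
    unsolicited=True, asks_for_action=True, video_is_usual_channel=False,
    tone_matches_stakes=False, allows_questions=False, discourages_verification=True,
)
for signal in risk_signals(clip):
    print("-", signal)
print("Pause and verify:", should_pause(clip))
```

Note that none of the fields describe how the video looks. Every input is about context and intent, which is the point of the checklist.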

From a technical standpoint, many organisations now rely on specialised detection tools such as Reality Defender, Hive, Sensity, Truepic, and Intel’s FakeCatcher to identify signals associated with synthetic or manipulated media. These platforms analyse visual artefacts, audio inconsistencies, and metadata patterns that may indicate AI generation. While such tools are an important layer of defence, they have a fundamental limitation: they react to what already exists. As generative models improve, attackers adapt faster than detection rules can be updated. Detection will always be a step behind creation, which is why tools alone cannot carry the full burden of defence. That’s why unsolicited video itself should now be treated as a risk signal, not proof.

The real defence isn’t choosing between humans or tools - it’s understanding that behaviour is the root cause of failure. Tools can flag content. Only people can decide whether to act.
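One way to picture that division of labour is a decision rule in which a tool's verdict is only one input. The sketch below is an assumption-laden illustration: `Decision`, `decide`, and the `verified_out_of_band` flag (meaning the request was confirmed through a separate, known channel) are hypothetical names, and no real detection product's API is modelled.

```python
from enum import Enum


class Decision(Enum):
    PROCEED = "proceed"
    VERIFY_FIRST = "verify through a separate, known channel"
    ESCALATE = "escalate to security"


def decide(detector_flagged: bool, action_requested: bool,
           verified_out_of_band: bool) -> Decision:
    """Illustrative policy: a clean detector result never clears a request by itself."""
    if detector_flagged:
        # The tool found signs of manipulation, so hand the content over.
        return Decision.ESCALATE
    if action_requested and not verified_out_of_band:
        # No flag is not proof of authenticity; the person still has to verify.
        return Decision.VERIFY_FIRST
    return Decision.PROCEED


# A convincing clip that the detector did not flag, asking for an approval:
print(decide(detector_flagged=False, action_requested=True,
             verified_out_of_band=False).value)
```

The design choice worth noticing is the middle branch: the absence of a flag leaves the decision with the person, which is where the argument above places the real risk.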

Which is exactly where the next question begins: how do you train judgment that holds up under pressure? That’s where OutThink comes in.

How OutThink addresses awareness for AI video risk

By now, it should be clear that AI-generated video isn’t slipping through defences because people can’t see the problem. It slips through because people are forced to decide under pressure. That’s why awareness alone doesn’t work.

AI video risk is fundamentally behavioural, not visual. Most failures don’t happen because employees lack knowledge. They happen because authority cues, urgency, and familiar context override training in the moment. One-off sessions and generic awareness programmes rarely change how people react when it matters.

This is where OutThink is different.

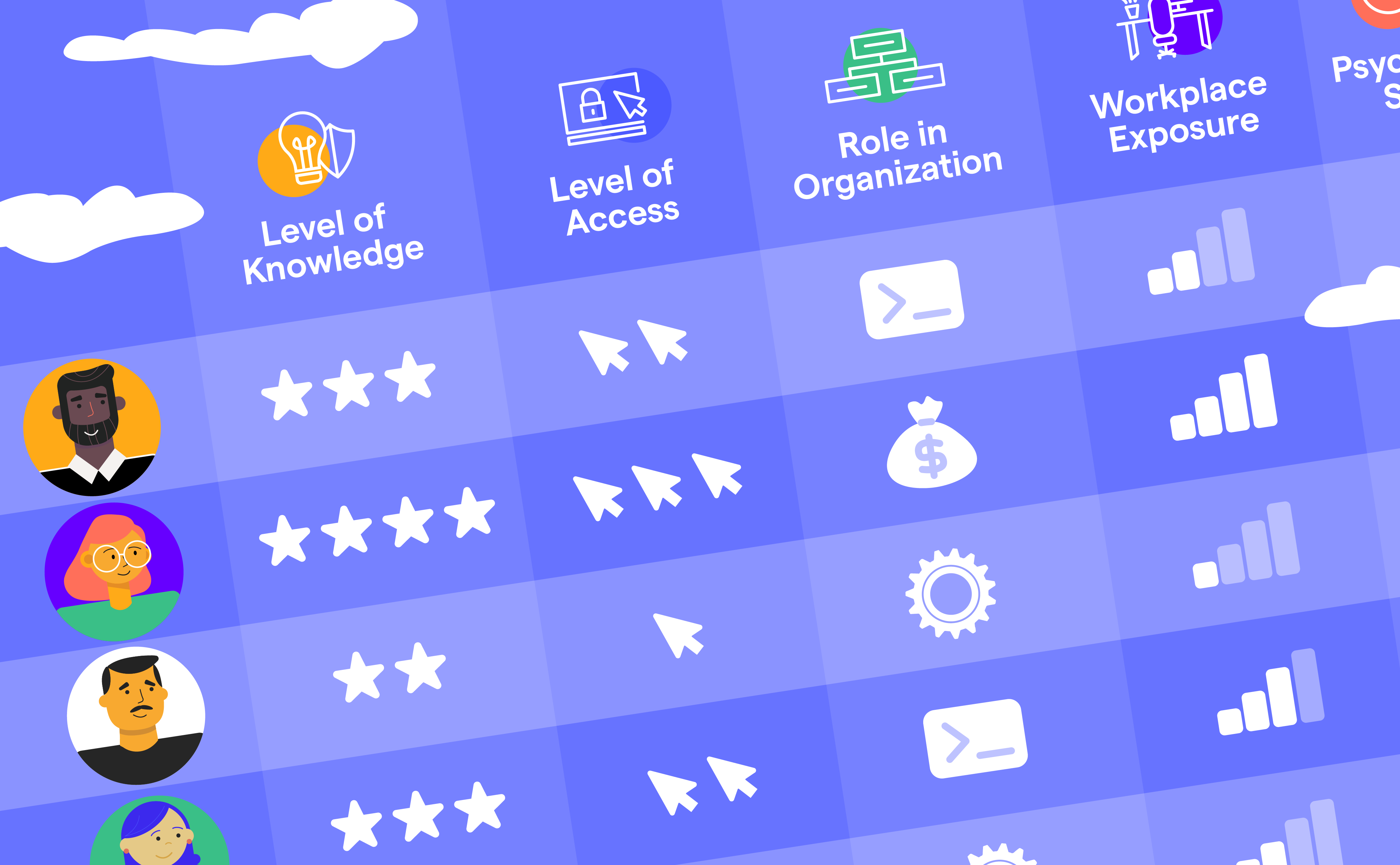

OutThink’s Human Risk Management platform focuses on how people actually behave, not what they can recall from training. Instead of delivering content and hoping it sticks, OutThink continuously measures and reduces risk by analysing real decision patterns.

How OutThink does this:

- Targets human behaviour - the root cause of most breaches

OutThink identifies risky behavioural patterns rather than treating incidents as isolated mistakes. This, in turn, enables early intervention before impact.

- Adaptive and personalised training

Learning paths adjust in real time based on individual behaviour and risk signals, making training relevant, timely, and far more effective.

- AI-powered feedback loops

Immediate, context-aware nudges help people correct their decisions while the experience is still fresh. This helps in reinforcing better judgment at the point of risk.

- Quantifies human risk holistically

By combining identity, attitudes, and observed behaviour, OutThink gives leaders a clear, actionable view of organisational vulnerability.

- Role-specific, behaviour-based engagement

Training aligns to roles and real threat scenarios, improving retention and real-world application.

As AI-generated video becomes more realistic, faster, and easier to deploy, a convincing video will no longer be proof of legitimacy. Detection tools will help, but they will always lag behind attackers. What endures is judgment - the ability to stop, question context, and interrupt the normal thought process when something doesn’t quite belong.

In that future, human judgment becomes the last durable security control.

Human judgement is the only saviour

AI-generated video will continue to improve, not in quality alone, but in fit. It will arrive faster, appear more casual, and blend more seamlessly into everyday work. As synthetic presence becomes easier to generate, seeing will no longer function as a reliable signal of authenticity.

Detection technologies will remain useful, but they will lag by design - reacting to patterns that attackers constantly refine. The real shift will be in how organisations prepare their people, not how well they train them to recognise artefacts.

Preparation means building judgment that survives pressure. It means helping people pause, question context, and interrupt familiar authority when something doesn’t quite belong. It means measuring how decisions unfold in real moments, not how well guidance is remembered in calm ones.

In a world where video can be manufactured on demand, human judgment becomes the most resilient layer of defence.

This is where Human Risk Management matters.

Platforms like OutThink focus on strengthening decision-making over time - measuring how people actually respond, reinforcing verification behaviour, and helping organisations reduce risk before incidents escalate into real damage.

Sources

- https://www.signicat.com/press-releases/fraud-attempts-with-deepfakes-have-increased-by-2137-over-the-last-three-year#:~:text=Despite%20the%20increase%20in%20AI,a%20multi%2Dlayered%20protection%E2%80%9D.

- https://deepstrike.io/blog/deepfake-statistics-2025

- https://www.theguardian.com/technology/2026/feb/03/deepfakes-ai-companions-artificial-intelligence-safety-report

- https://www.resemble.ai/wp-content/uploads/2025/04/ResembleAI-Q1-Deepfake-Threats.pdf

- https://cyble.com/knowledge-hub/deepfake-as-a-service-exploded-in-2025/

- https://pmc.ncbi.nlm.nih.gov/articles/PMC11157519/

- https://www.zerofox.com/glossary/deepfake-detection/

- https://microblink.com/resources/glossary/video-deepfake-attacks/

- https://rsisinternational.org/journals/ijrias/articles/deepfake-detection-using-multimodal-ai/

- https://cacm.acm.org/research/as-good-as-a-coin-toss-human-detection-of-ai-generated-content/

- https://www.sciencedirect.com/science/article/pii/S2589004221013353

- https://arxiv.org/abs/2405.04097